The Human Premium: Two Questions That Determine What Stays Human In Procurement

Not everything needs a human. Here's how to tell what does.

If you read last week’s post, you may have been left with an uncomfortable question:

“If what stays human in procurement is determined by stakeholders rather than by the function itself, how does a practitioner actually figure out where the line falls?”

It’s one thing to accept that your relevance is shaped by the people you serve. It’s another to know what to do about it.

This week, I want to give you a tool for answering that question: For each key outcome, how do we determine what stays human and what doesn’t?

Why The Matrix Isn’t Enough

Of course, one approach could be to apply the Human Edge Matrix to each outcome, which I discussed in this post a couple of weeks ago (along with an interactive tool that you can use to assess how vulnerable your own role is to AI).

However, the Matrix was designed to classify work at the role and task level; it tells you whether a specific activity should be automated, augmented, or kept human.

For example, I’d posit that outcome #1 (Speed and Responsiveness of the Procurement Process) can be almost entirely machine-executed (perhaps with limited human involvement where needed), while outcome #8 (Crisis Management) will almost certainly remain fully human (even if those humans are somewhat augmented with AI).

But between these extremes, depending on the individual company’s situation (influenced by everything from its market, competition, financials, category focus, etc.), any of the eight outcomes could fall anywhere on the spectrum from fully human to fully machine.

What we need now is a complementary lens that operates at the outcome level and answers the question: “For this outcome, does human involvement change what the stakeholder receives?”

Two Questions That Draw The Line

So what is the right way to understand what stays human at the outcome level?

At least at the Internal Customer level, I’d suggest two core questions need to be answered:

Does human involvement produce a value premium that justifies the cost? AKA “Am I making this better?”

This question is purely economic: is the outcome measurably better, or perceived as meaningfully more legitimate, when a human is involved? And is that difference worth what the human costs?

This covers a host of considerations including:

Relative quality differentials, i.e. is there a material difference in quality between ‘human only’, ‘human plus machine’, and ‘machine only’?

Business partner requirement - does the work require Procurement to partner with the stakeholder to arrive at an optimal solution? Does the practitioner bring advisory value to the table?

Experience levels - Does the practitioner bring a depth of experience and insight that makes a difference?

Does accountability require a human? AKA “Does someone need to own this?”

This question isn’t about whether AI can “do the analysis” but about whether the organization can accept a decision for which no human bore responsibility.

This covers a host of issues including:

Regulatory sign-off

Ethical and/or value-based oversight

Complexity that demands multiple human eyes (for validation or risk mitigation), and

Situations where someone needs to be personally answerable for the outcome.

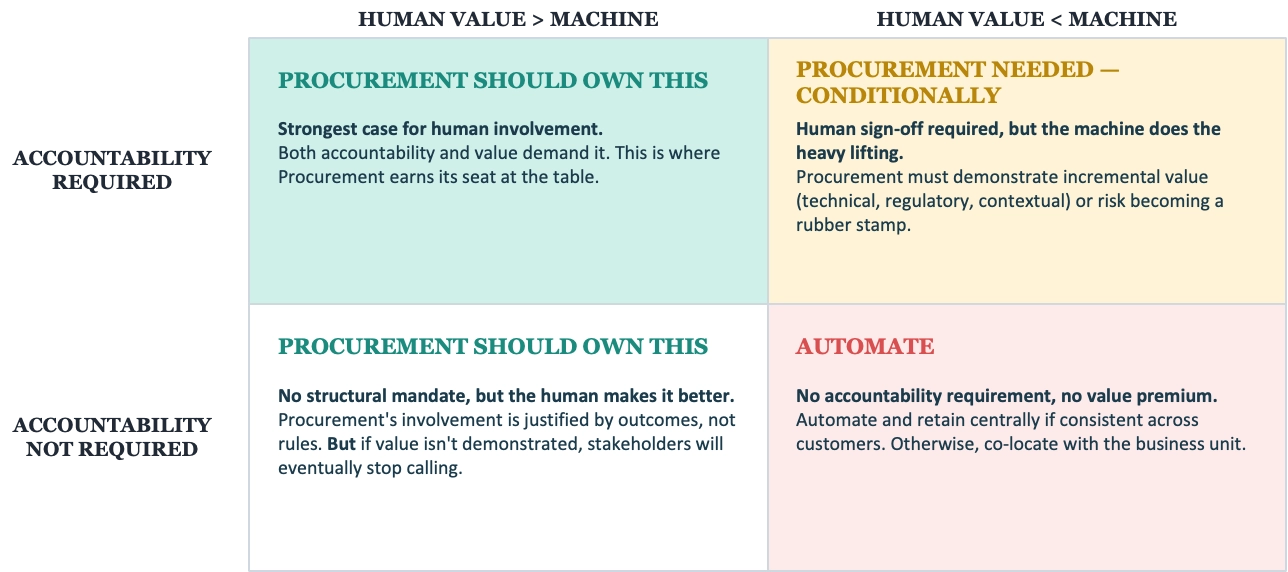

This gives us a simple 2x2 that connects naturally to the Human Edge Matrix as a complementary lens rather than a competing one: accountability required / not required on one axis, and human value premium present / not present on the other. The result is four quadrants, each with a clear implication for the internal customer:

Accountability Required:

Human Value > Machine Value: Procurement should handle

Human Value < Machine Value: Procurement is needed ONLY if it can show material, incremental value (technical knowledge, regulatory understanding, etc.); otherwise stakeholders stop calling (risk of rogue duplication)

Accountability Not Required:

Human Value > Machine Value: Procurement should handle

Human Value < Machine Value: Automate, and retain with Procurement IF there is consistency across customers; ELSE co-locate with the customer

The framework should be applied at two levels and at different cadences:

The Category Strategy level:

When a CPO or category leader is designing or redesigning how a category operates, they apply the 2x2 to the outcomes that matter for that category.

This is a periodic, strategic exercise. You do it when you’re setting up the category strategy, and you revisit it when something material changes, e.g. a new regulation, a new technology capability, or a shift in what the business expects from that category.

The point is not to recalibrate constantly, but to revisit this analysis at deliberate review points.

The Exception/Escalation level:

In day-to-day operations, the default mode is whatever the category strategy determined.

But specific situations will arise that challenge the default: a supplier relationship that ran fine on autopilot suddenly needs human attention because of a quality failure, or an internal stakeholder who was happy with automated reporting now needs human counsel because they’re facing a board question about supply risk.

These are the moments where a practitioner applies judgment about whether the current situation has shifted the accountability or value-premium calculus. They use the two questions as a gut-check: has something changed about who needs to be accountable here, or about whether my involvement changes the outcome?

The Framework in Practice

Let’s apply this framework to three different scenarios:

Scenario 1:

Your VP of Manufacturing needs to consolidate your packaging supply base from five suppliers to two.

This impacts the Total Cost of Ownership and the Supply Resilience outcomes.

AI can do a lot here - spend analysis, supplier performance scoring, TCO modeling across the five suppliers, scenario modeling for different consolidation options and more. And it can do all of this faster and more comprehensively than any human analyst.

But let’s apply the two questions:

Does accountability require a human?

Yes - consolidating from five to two suppliers is a decision that increases concentration risk. If one or both of the remaining two suppliers fail, production is materially impacted.

Someone needs to own that call, explain the rationale to the plant director, and be answerable when the board asks why the company is now dependent on two packaging providers instead of five.

No organization is going to accept “the algorithm recommended it” as an answer when a production line goes down.

Does human involvement produce a value premium?

Yes - but not where we might expect. The analytical work (spend modeling, TCO calculations, performance benchmarking) is exactly the kind of structured cognitive work that AI does well and arguably better than humans.

The value premium shows up in the judgment calls the data can’t make: which two suppliers have the management quality and financial stability to handle twice the volume? Which ones will invest in our relationship if we double their share? How will the three suppliers we’re exiting react? Will they become hostile in other categories where we still depend on them, or will there be political/social implications?

Those are questions that require contextual insights (market, relationship and commercial) that no model currently possesses.

This lands in the top-left quadrant: accountability required, human value premium present. Procurement should own this. But notice that the analytical work within this outcome can and should be machine-augmented. What stays human is the judgment, the stakeholder conversation, and the accountability for the decision.

Scenario 2:

Your Chief Compliance Officer needs assurance that a new raw materials supplier in SE Asia meets the company’s labor standards and environmental commitments before the first PO is issued.

This impacts the Compliance and Ethical Assurance outcome.

AI can do a significant amount of the legwork - screening the supplier against sanctions lists, pulling public records, analyzing ESG ratings from third-party databases, even scanning news sources for red flags. In fact, the machine will almost certainly be more thorough and faster at this screening than a human would be.

But again, let’s run the two questions:

Does accountability require a human?

Yes - this is one of the clearest cases. Regulatory frameworks increasingly require demonstrable human oversight of supply chain due diligence decisions.

Beyond the legal requirement, there’s a governance reality: if this supplier ends up on the front page for labor violations, someone in the organization needs to have signed off on the decision to onboard them.

“We ran the algorithm and it came back green” is not a defense that any general counsel will accept.

Does human involvement produce a value premium?

Only partially - this is where it gets interesting. For the screening and data-gathering work, the machine is arguably better than the human. It’s more comprehensive, less likely to miss things, and doesn’t get fatigued reviewing supplier questionnaire number forty-seven.

But the human value premium is there - narrow, but critical. It shows up in the interpretation of ambiguous signals (the supplier’s ESG score is acceptable but their audit history shows a pattern of just-in-time remediation before inspections), in the ethical judgment calls (the data is technically compliant but something doesn’t feel right), and in the conversation with the CCO where someone needs to say “I’ve looked at this and here’s my assessment”.

This lands in the top-right quadrant, but close to its left edge: accountability is required, but the machine does most of the heavy lifting. Procurement is needed, but primarily for the sign-off, the judgment on edge cases, and the ability to stand behind the decision. If the procurement person is simply rubber-stamping what the AI screening tool produces without adding interpretive value, the function is at risk of being reduced to a compliance checkbox.

Scenario 3:

Your Head of R&D wants to explore whether any of your existing chemical suppliers could reformulate a key input to reduce costs and improve sustainability - but she doesn’t know which suppliers have the capability or the willingness.

This is the Supplier-Enabled Innovation outcome.

And it’s worth noting what AI can and can’t do here. AI can scan supplier capability databases, analyze patent filings, identify which suppliers have R&D facilities working on relevant chemistry, and even draft an initial outreach brief. All of which is useful groundwork.

So let’s run the two questions:

Does accountability require a human?

Not really - at least not in the regulatory or compliance sense.

Nobody is going to get fired or face legal consequences for how the innovation exploration was conducted. There’s no structural mandate for human sign-off on “let’s have a conversation with Supplier X about reformulation possibilities.”

This isn’t a risk or governance question.

Does human involvement produce a value premium?

Overwhelmingly yes - this is perhaps the clearest case of human value premium across all eight outcomes. Innovation from suppliers doesn’t happen because you send them a brief.

It happens because a procurement professional who has built trust with the supplier’s technical team over years picks up the phone and says, “I think there might be something here. Can we get your head of applications science in a room with our R&D director?” It happens because the procurement person understands both sides well enough to see the connection that neither party would see on their own. It happens because the supplier’s commercial director is willing to invest internal resources in the exploration because she trusts the procurement person’s judgment that this company will actually follow through (and not just run a free innovation workshop and then give the business to a cheaper competitor).

No AI can replicate the relational capital, the cross-organizational pattern recognition, or the credibility that makes a supplier say “yes, we’ll invest our best people in this.” The machine can identify the opportunity. The human creates the willingness.

This lands in the bottom-left quadrant: no accountability requirement, but strong human value premium. Procurement should own this - but there is a nuance: it has to earn it. There’s no structural mandate keeping this work in procurement. If the R&D director doesn’t believe the procurement person adds value to her supplier innovation conversations, she’ll go directly to the suppliers herself. Procurement’s ownership of this outcome is justified entirely by demonstrated value, not by rules or policies. And that makes it both the most rewarding and the most fragile kind of human work.

(It’s worth dwelling a little on this last point: the work that has the highest human value premium but the lowest accountability requirement is the work practitioners most need to protect. Unless they are genuinely, demonstrably good at it, stakeholders will have no reason to keep it with Procurement, or to work with the function at all.)

The Supplier’s Test Is Simpler - But No Less Important

You may have noticed that I said earlier the 2x2 is primarily a tool for thinking about the internal customer relationship. So what about Procurement’s other prime stakeholder: the supplier?

Well, the supplier/market frame operates on a different logic.

When a supplier evaluates whether they need a human counterpart in Procurement, they’re not running an accountability-versus-value-premium calculation. They’re asking something more fundamental:

Does this person have the authority to commit?

Do they understand our business well enough that I don’t have to re-explain our constraints every quarter?

Will they still be here next year, or will I be rebuilding this relationship from scratch with their replacement?

In other words, the supplier’s test for what stays human is about continuity, authority, and contextual depth. For the supplier, there is real signaling value in human engagement.

Because the fact is that a supplier will accept automated purchase orders, automated invoice processing, and even automated performance measurement.

But what they won’t accept - at least for relationships that are important to them - is a rotating cast of humans with no institutional memory, or worse, no human at all when they need to have a difficult conversation about pricing, capacity, or priorities.

This is, therefore, a simpler assessment than the internal customer 2x2, but it carries its own implication: the human work that matters most on the supplier side is relational infrastructure.

And relational infrastructure takes time to build, is easy to destroy, and is impossible to automate.

Four Things The 2x2 Doesn’t Show You

First, decision making cannot be a cold process.

We are humans after all, and hence have to make reasonably human decisions. And so there is a rational case to be made that, in many instances, human involvement has economic value precisely because people aren’t rational about it. The empathy research discussed in the last post shows us this: the “human empathy premium” is an economic fact, not a sentimental plea.

Second, none of the above should be seen as an argument for reducing the practitioner to a passive recipient of stakeholder judgment.

“What stays human is what your stakeholders demand to be human” can be seen as disempowering but that is not at all the point. The practitioner has the opportunity to shape stakeholder expectations rather than merely respond to them, because the best procurement professionals don’t just answer what stakeholders ask for, they influence what stakeholders think they need.

That takes orchestration, judgment, creativity, and an outcome orientation that goes beyond the traditional cost-savings rubrics.

Third, what happens when the two stakeholder groups - internal customers and suppliers - disagree?

A business unit might be perfectly happy receiving an AI-generated market analysis and never speaking to a procurement person. But the supplier on the other end of that same category might need human engagement for the relationship to function e.g. contract renegotiations, performance conversations, innovation discussions, etc.

The reverse is also possible: a supplier might be fine dealing with automated PO systems while the internal stakeholder insists on a human procurement partner for strategic advice.

These situations will occur, and it’s worth acknowledging that the two frames can produce conflicting signals and that navigating that conflict is itself irreducibly human work.

Finally, we have to note the temporal factor. That is, things do and will change.

Whatever our analysis shows today (especially about AI’s current capability thresholds), it’s worth remembering that it reflects only the current view. It’s incumbent on us to keep reading the signals as the window moves.

The Real Point

The point of this framework is not to identify what’s ‘irreducibly’ human, because that line will keep moving as AI improves. The point is to identify what’s economically and organizationally irrational to hand to a machine, even if you technically could.

So it’s worth asking two questions: Does someone need to own this? Am I making this better?

If the answer to either is “yes”, the work stays human. If the answers to both are “no”, it doesn’t, regardless of tradition, comfort, or sentiment.

And if you’re sitting in a quadrant where accountability isn’t required and your value premium is the only thing keeping you relevant, you need to see that as a signal, not a safety net.

Because your premium has a shelf life. The question is what you’re doing to extend it.