How to Use AI Without Losing Judgment

A practical playbook for preserving cognitive agency in an AI-enabled workplace

In my last post, I flagged the idea of cognitive debt, which is the hidden cost we incur when a tool helps us complete a task without making us do enough of the thinking to truly understand, evaluate, or reproduce the outcome ourselves.

I also discussed how, if this debt goes unpaid, it leads to a loss of cognitive agency, which is the ability to understand the reasoning behind an outcome, assess whether it is sound, adapt it when needed, and take real ownership of the judgment (and output) involved.

This loss is a real problem, particularly in a world where we must grapple with information (and cognitive) overload, an attention economy, as well as performance metrics (explicit and implicit) that reward volume and activity over quality and individual development. The proliferation of AI tools is only exacerbating this problem, taking it to another level entirely. As I mentioned in my prior post, it’s the difference between creating sounds and developing musicianship.

And yet these tools aren’t going anywhere. Whether standalone or embedded within more traditional SaaS tools, AI is becoming ubiquitous and will soon reach the point where it is no longer a feature but an expectation.

Used thoughtfully, these tools will dramatically enhance both our efficiency and our effectiveness, but only if we take the initiative. The onus remains on us to use them well, not only for the betterment of our workplaces but for ourselves as well.

In this post, then, I’ll outline a practical framework for using AI in ways that preserve cognitive agency rather than erode it.

The Cognitive Agency Framework

At the heart of this framework is a simple principle:

Use AI to reduce mechanical effort, not to replace formative judgment.

But while this idea is simple in concept, it’s much more involved in execution. There is no point solution when it comes to the problem of minimizing cognitive debt and retaining our cognitive agency.

Our approach has to be multi-faceted, spanning how we lead, the parameters we set for AI use, and what each of us must do individually. This is less a tool problem than an operating model problem.

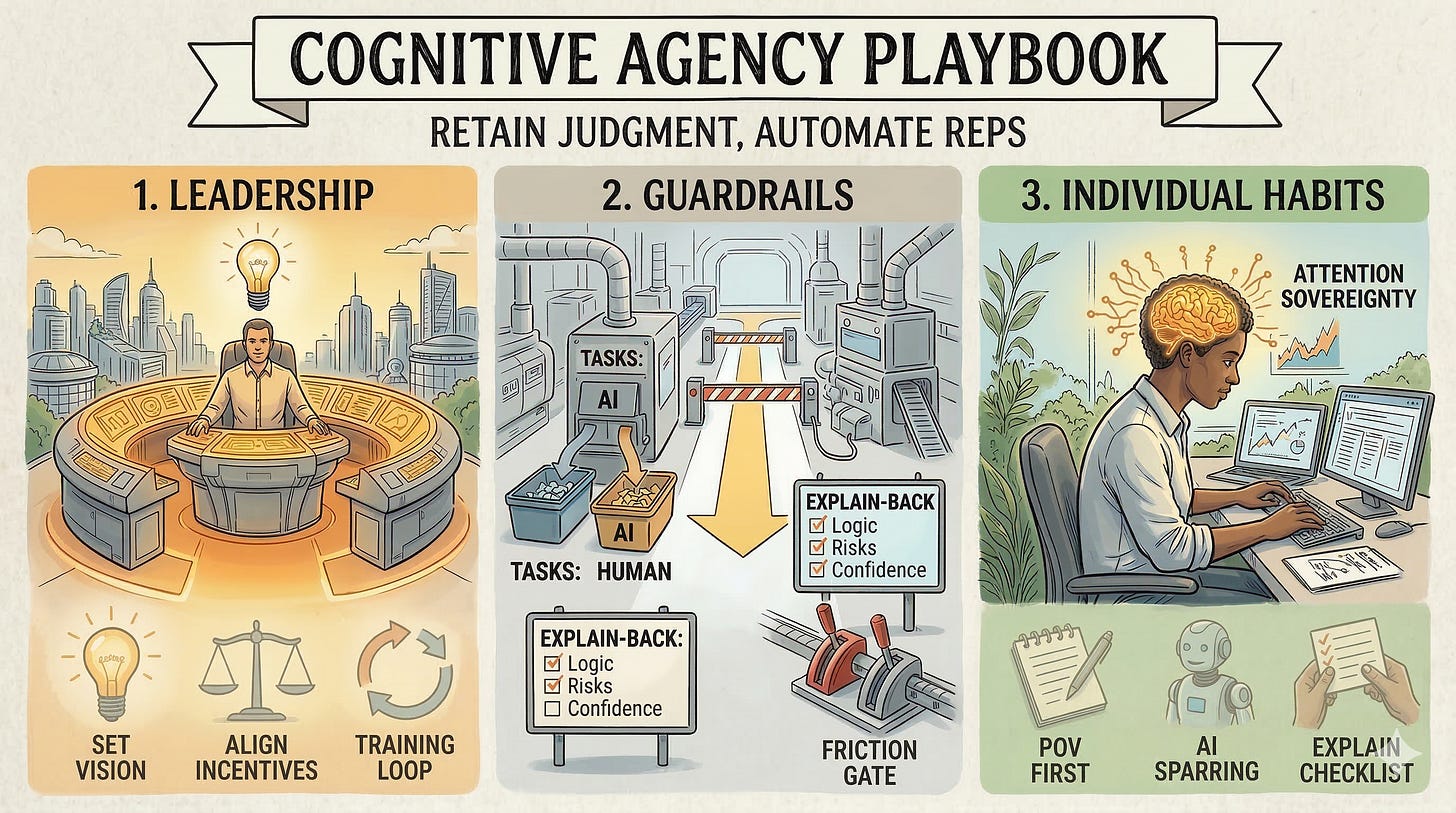

And this operating model - The Cognitive Agency Framework, as I call it - has three layers:

Leadership (conditions)

Guardrails (workflow rules)

Individual Practices (specific habits)

Let’s look at each of these in turn.

Layer 1: Leadership Sets the Conditions

Everything starts with leaders, who must set the stage for their teams at the outset. The central question they need to address is:

“How do we use AI to capture efficiency gains without removing the cognitive reps that build and preserve judgment?”

There are four specific actions they should take:

Establish the Vision:

Clarify the kind of practitioner the organization will value going forward. That is, enabled by AI tools, practitioners must:

Move from task completion to outcome ownership, where judgment and defensibility become the differentiators

Remain as focused on effectiveness as on efficiency, prioritizing results for (internal) customers and moving the needle on their metrics

Align Incentives:

If leaders reward only efficiency metrics, such as faster turnaround, more output or shorter cycle times, they will (accidentally) train people to maximize AI throughput, not human discernment.

Instead, they should balance these metrics (which will still be important) with more ‘human’ metrics that measure and reward performance against the vision described above. Specifically:

Measure for impact, not simply process compliance

Recognize innovation and progressive thinking in annual evaluation cycles

Reward reasoning quality and assumption strength, including risk awareness, contextual judgment, defensibility and trade-off understanding

Reward practical, sustainable AI deployment, e.g., embedding AI into workflows

Build the Training Loop:

Early, heavy AI reliance can weaken independent reasoning, ownership, and recall, so it’s imperative to ensure that team members put in the mental reps needed to build their cognitive scaffolding.

In other words, the key is to ensure that “judgment reps” are baked into the work itself.

So set the expectation that every team member, and junior team members in particular, gets practical experience “doing the work” for specific types of work (see the next section on Guardrails), rather than letting AI remove ‘first-principles’ practice.

In practice, this means deliberately creating “unassisted reps” in areas such as issue framing, contract risk spotting, and even recommendation writing.

For junior team members, this could mean a cycle of a solo first pass, followed by an AI-assisted revision, and then a review by a senior colleague.

Broadcast Learning:

Every team will have individuals who are leading the charge when it comes to innovation and AI deployment. It’s important to identify and encourage these early ‘champions’.

But long-term value for the function will only come from scale, and that starts with strong communication and information sharing. The more people learn about the potential, the early wins and realized value, and the personal upside, the higher the chances of success.

It’s important, then, to communicate broadly, which means:

Hold regular town halls to share AI wins, relevant use cases and key lessons learned

Invite demos by builders to show the potential and value of current and emerging tools

Host hackathons on critical functional issues

Provide peer recognition, including symbolic as well as cash prizes

Layer 2: Guardrails Shape the Workflow

With the leadership posture defined, it’s important to set appropriate guardrails that help ensure intent turns into behavior.

This requires defining the specific rules that govern how team members work with AI tools. Five key guardrails should be stipulated:

Classify Tasks:

Which kinds of work can AI be allowed to run autonomously, and which cannot?

Not all tasks should be treated the same: some need little to no human attention, while others need a human driving them throughout. As such, separating autonomous, assistive, and formative work is an essential first step.

Some tasks, such as formatting, organizing information, summarizing, and producing first-pass drafts, are good candidates for heavier AI use.

Others are formative in nature because they build or preserve judgment. Think:

Framing the problem

Identifying what’s missing in an analysis

Making trade-offs in difficult situations

Deciding what matters in moments of ambiguity

Defending recommendations

These are tasks where humans need to stay in the loop. (No one is going to ask the algorithm to justify the decision. They’ll ask the human who put it forward.)

As a practical rule, then, create a team-level list of:

Tasks where AI can lead

Tasks where humans must lead

Tasks where AI can support but not substitute

Document this in a one-page summary organized by workflow, potentially in an “AI RACI” format. Review it at regular intervals (quarterly, or semi-annually at minimum) as models and tools evolve.

Ensure No AI-First for Judgment Tasks:

For specific tasks that require human judgment and involvement, stipulate that a human must always do the first pass. AI cannot be allowed to initiate the ‘thinking’.

Embed the idea that people must think before they prompt, fleshing out their ideas, the core problems, constraints, etc. This framing doesn’t need to be a thesis; it can be short. But the discipline must be demanded: the human must contribute a reasonable level of ideation and thought as the first step.

Only after that first step should AI be brought in for assessment and input.

Use AI as a Challenger:

Never use AI as a substitute or as the sole author of the output. Instead, treat it as a sparring partner. Reiterate that AI should be used to:

Expand options

Stress-test thinking

Surface blind spots

Improve articulation

Embed “Explain-Back”:

Make clear to team members that they will be expected to explain the basis and implications of their analyses, and that they will be asked whether, and why, they are comfortable owning the outcomes of their assessment. Expect them to answer questions such as:

What is the decision?

What trade-off is being made?

What would make this wrong?

What information is missing?

What is your level of confidence, and why?

If someone cannot defend their AI-assisted output, they cannot own it.

Insert Friction Gates:

Well-placed friction preserves thinking quality.

For high-stakes categories and the process points that matter most, make clear that team members will be asked to present their key findings and explain the reasoning behind them.

Define these insertion points explicitly. They could encompass:

Award decisions over $X

Contract deviations that shift liability/indemnity/termination

Negotiation postures and walk-away thresholds with strategic suppliers

Risk acceptance (cyber, continuity, regulatory)

External communications that could create reputational exposure

For particularly critical decisions, require a red team to challenge the analysis (e.g., “make the case against this”) and ask the team to defend its point of view.

The key here is simple: raise the “cost of cognitive offloading”. When that cost rises, people offload less and retain and own more.

Layer 3: Individuals Build the Habits

Of course, the rubber meets the road with the individual. While the guardrails define the rules, individual practices determine whether a team member actually builds judgment.

As such, each individual’s goal must be to master ‘Attention Sovereignty’, that is, to actively direct attention rather than surrender it to the algorithm. Attention sovereignty is the precondition for everything below.

Four key habits are essential here:

Develop Your Point of View First:

One of the biggest protections against cognitive debt is human pre-processing before AI enters the picture. In other words, think before you prompt.

For example, before using AI, first write down:

the problem statement

the desired outcome

the likely risks

your own first-pass recommendation

Even brief notes, a hypothesis, or a few key framing questions and ideas help. Start with a human frame.

Use AI as a Sparring Partner:

With the human frame fleshed out (even at a high level), use AI as a challenger, expander, and/or editor.

The safest pattern is not “do it for me” but “iterate with me”. Ask it to work with you as a consultant or analyst would. Ask it to:

Challenge your assumptions

Identify three risks you may be missing

Critique your recommendations

Provide you with alternative considerations

Test your logic for holes

Help you compare different scenarios

At the same time, carefully evaluate what it gives you back, and test its reasoning to make sure it is sound and that you genuinely agree with it. Ask it questions, pressure-test key statements and ideas, and push back where needed. Take nothing for granted.

This preserves human ownership of the core judgment while still harvesting the benefits of the tool’s speed and breadth.

Build Explain-Back Discipline with a Checklist:

This is a simple safeguard but also a powerful one. For every key analysis that leverages AI heavily, be able to explain:

What the recommendation is

Why it makes sense

What assumptions it depends on

What the risks are and where it could fail

What you changed from the AI output

The practical rule to follow is:

“I will not submit an AI-assisted recommendation until I can fully defend it in my own words.”

Engage in Regular Self-Critiques:

Regularly reflect on your thought processes, biases, and how you learn. Consider how you can continue to push your thinking and ownership of your work and analyses.

Keep a note of the tools you use and their relative strengths and drawbacks. Based on your own usage, establish personal “red-flag” triggers for when to use AI and when not to.

Adopt a two-source rule for high-stakes facts. Treat AI as a draft, not a source. Be skeptical of facts provided by AI tools, and verify critical claims against primary documents or an independent reference.

Reconstruct from memory. After using AI, restate the reasoning without looking. If you can’t explain it cleanly, you’ve borrowed output without building understanding.

The Procurement Cheat Sheet for Leaders

The three-layer Cognitive Agency Framework is a useful way to think about retaining cognitive agency, both as a leader and as an individual practitioner. But implementing it effectively in any organization takes a good measure of work.

In my view, the realized value is worth that work, but I appreciate that ‘speed to action’ matters more than ‘perfect implementation’. So if you do nothing else, implement at minimum the following four rules of thumb:

Rule 1: Define “When AI Should Be Avoided”

Some contexts are fragile, and the downside of a subtle error can be asymmetric: small mistakes can create outsized legal, financial and/or reputational consequences.

As such, heavy AI reliance is best avoided in specific defined contexts. These could include:

Sensitive negotiations

Legal commitments without counsel review

Reputational risk communications

Compliance/regulatory issues

Situations requiring confidential data handling policies

Rule 2: Preserve Human-First Reps in Judgment-Heavy Workflows

Make clear that humans must think first in judgment-heavy workflows, and spend a little time identifying which workflows those are. They could include:

Supplier selection/award situations

Risk acceptance decisions

Negotiation strategy and walk-away scenarios

Contract deviations and redlines that shift risk

Stakeholder trade-offs and prioritizations

Financial/business case assumptions

Rule 3: Let AI Challenge and Draft, Not Decide

Have your team develop the first draft of any analysis or output. AI can then assist by:

Summarizing bids

Drafting supplier emails

Structuring comparison tables

Synthesizing documents

That said, the human should still own:

Supplier selection logic

Trade-off decisions

Stakeholder balancing

Risk acceptance

Negotiation posture

Rule 4: Require Defense, Not Just Delivery

A recommendation must not be considered complete until the owner can explain the:

Logic

Risks

Alternatives rejected

Contextual factors

This ensures the organization is developing professionals, not simply ‘output assemblers’.

Closing Thought

AI is going to keep getting better. The real question is whether we (as leaders and as individual practitioners) will get better with it.

If leaders reward throughput, teams will optimize for throughput. If workflows don’t require defendable reasoning, people will stop building it.

Cognitive agency won’t survive on its own or by accident. It will survive only by design, when our systems make thinking mandatory and unavoidable.

It is incumbent on leaders, then, to set the conditions and establish the guardrails that shape the workflow, and on individuals to practice the habits that keep their judgment sharp.

This is the aim of the Cognitive Agency Framework. Use AI to remove mechanical effort, but protect the cognitive reps that build discernment. Otherwise, we’ll ship polished outputs but, over time, lose the capability underneath them.

The ultimate goal is simple: capture speed without surrendering musicianship.