Cognitive Debt: The Hidden Cost of Letting AI Think for Us

Why the Age of AI Demands More, Not Less, Discipline in How We Think and Work

AI is an incredible technology - one that’s taken the promise of classic SaaS and amplified it exponentially.

We now have tools that can help us produce better-looking work, faster, and with less friction than ever before. That’s a real and massive gain, but it’s one that comes with a hidden risk: these very same tools make it easier to skip the mental work we need to be doing to truly own our work. Or, to be more precise:

What happens when the tools that improve our outputs also reduce the amount of formative and evaluative thinking we do to truly understand, evaluate, and own them?

Creating Sounds vs. Developing Musicianship

Let me explain this with an analogy from the music world.

A couple of decades ago, if you wanted to record an album, you had to learn to play an instrument, put together a band, hire a studio, bring in a producer and an engineer, record multiple takes, and then piece together and master the final output.

Today, you can bypass much of that. Modern technology has made it easier than ever to create something that sounds polished. Synths, virtual instruments, multitrack recording, editing software, and now a host of AI tools that can do all of the above from just a prompt help generate impressive results in a fraction of the time.

And yet, there remains a difference between creating sounds and developing musicianship.

Musicianship means developing a sense of taste, timing and feel. It means having an ear for what works, understanding when to elevate tension and when to release. It means developing an understanding of which elements work together and why.

Developing musicianship requires doing the work. But creating sounds? Today, anyone can produce something that sounds good without developing the underlying fluency needed to create, diagnose, adapt and finalize with intention.

This same distinction is playing out in other spheres as well, including Procurement knowledge work. And that presents us with a challenge.

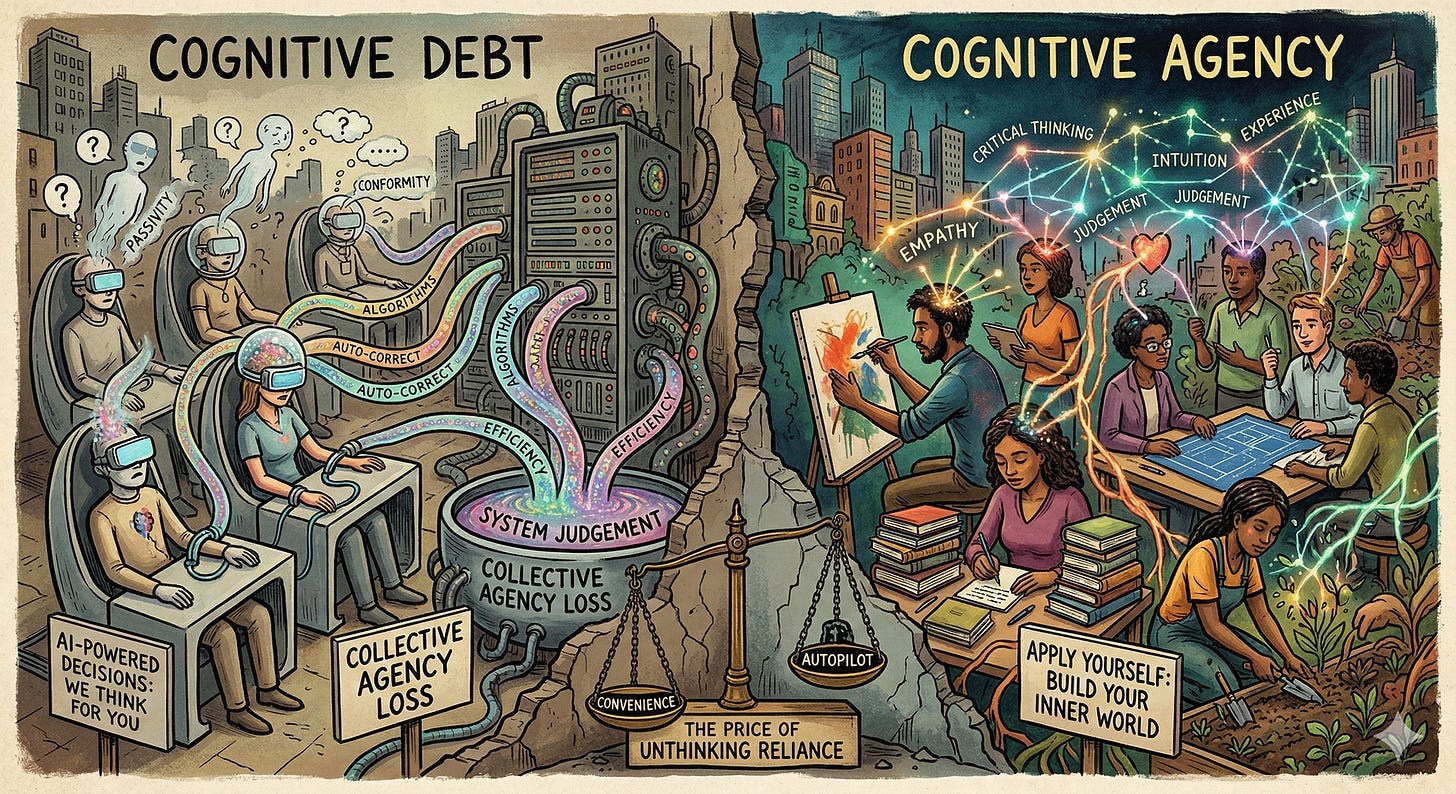

Cognitive Debt and the Erosion of Agency

In Procurement, we also have access to a host of incredibly powerful AI tools, but their deployment is all over the map.

I don’t just mean how widely they are used but how intentionally. Some are using them well, others less so, often because these tools sound so authoritative and confident that users mistake their polished language for sound judgment.

This undisciplined and unintentional deployment in our knowledge work comes with a cost, and the risk is cumulative: repeated cognitive outsourcing builds up Cognitive Debt which, over time, erodes our Cognitive Agency.

Let me explain both of these terms briefly.

Cognitive debt is the hidden cost we incur when a tool helps us complete a task without making us do enough of the thinking to truly understand, evaluate, or reproduce the outcome ourselves. It’s a real problem because the convenience of today’s tools can, if we’re not careful, result in a complacency of understanding. That is, convenience borrows against comprehension, with very real, very material trade-offs.

The result, if this debt continues to go unpaid, is a loss of cognitive agency: the ability to understand the reasoning behind an outcome, assess whether it is sound, adapt it when necessary, and take real ownership of both the judgment involved and the output generated.

This ‘cognitive offloading’ has real and practical implications: these tools might improve immediate task performance, but they do so while also reducing retention and internal encoding (i.e. weakening the depth of neural processing required for learning and recall). That trade-off matters most when our goal is to ‘grow’ as much as it is to ‘get the work done’.

Why This Matters in Procurement

Now, this might sound like a problem for just the new entrants into the function, but it’s really a problem for all practitioners, junior and senior alike.

For juniors, the risk is failing to build the foundation of true, high-quality performance in the long run. If no ‘cognitive scaffolding’ is built in the first place, the foundational thinking skills that are so essential to good judgment never form.

For experienced practitioners, the risk is a loss of sharpness, because over-reliance and over-delegation can lead to complacency and declining vigilance. Passive reliance is never a good thing.

(And for organizations as a whole, the risk is that we normalize all of the above, with long-term detrimental impacts.)

This matters especially in Procurement because our ability to apply good judgment and make strong decisions that serve the corporate good, even as we grapple with a multitude of competing agendas and demands, is what defines our success. Our work isn’t just about producing outputs but about generating better outcomes.

In that regard, AI can help us draft, summarize, analyze, and recommend, but it cannot, by itself, deliver real procurement judgment. We still need discernment. We need the ability to read a stakeholder or interpret the nature and magnitude of a particular risk. We need to be able to make real-time, thoughtful, conscientious trade-offs. For example:

Summarizing a contract is not the same as understanding its comparative risks in the context of organizational realities

Generating a sourcing recommendation is not the same as exercising true commercial discernment that incorporates the nuances of the situation

Producing a negotiation script is not the same as reading leverage, timing, and context

The fact is that Procurement decisions are often made under ambiguity, across competing stakeholder incentives, with incomplete information and real commercial consequences. So our judgment matters.

And even putting aside ‘low quality outputs that play at being correct’ (the product of hallucinations, which are still a concern, and of errors that will surely diminish as the tech continues to improve), if we don’t own the work, then what is our value?

It’s also worth asking ourselves: If we’re no better than the machines, then why does the organization need us at all?

The Real Question

Look, none of this means cognitive offloading is inherently bad. In many contexts, it’s rational and valuable. The problem begins when we offload the very parts of the work that are building, testing, or preserving judgment.

The real question, then, is not whether we should use AI; that genie is out of the bottle. It’s whether we will use it in ways that preserve the foundations and habits that judgment depends on.

Because the danger is not that these tools do our work for us, but that, used carelessly, they can leave us producing the appearance of strong work without building or sustaining the capability to truly own that work.

The challenge for the practitioner, therefore, is to learn how to create music without surrendering musicianship.

The Practical Challenge

That leaves us with a practical challenge: how do we use AI to capture the gains in speed and quality without outsourcing the very cognitive reps that build judgment in the first place?

The answer to that question is multi-faceted - from how we lead on this issue, to how we manage it, to how we train for it.

In a follow-up piece, I’ll outline a practical framework for using AI in ways that preserve cognitive agency rather than erode it.